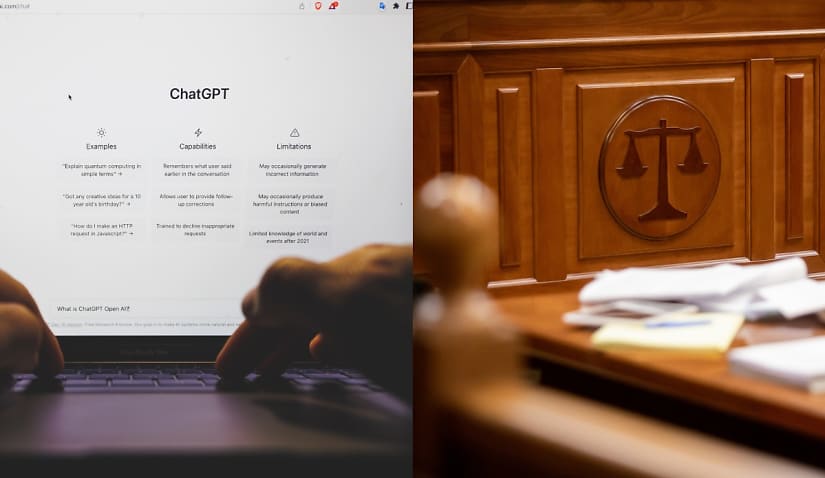

ChatGPT was recently used as part of a judgment in Colombia, sparking a global debate on how artificial intelligence (AI) technologies can and should be used.

Judge Juan Manuel Padilla Garcia recently used ChatGPT in a court decision, making global headlines. However, those in the legal profession have reported a number of risks associated with AI technologies, particularly when used within workplaces.

Garcia J, of the First Circuit Court in the city of Cartagena, used the bot when presiding over a case involving an autistic child — and whether that child’s insurance should pay for the entirety of his medical expenses and transport costs.

The judge asked ChatGPT legal questions, such as “Is an autistic minor exonerated from paying fees for their therapies?” and “Has the jurisprudence of the constitutional court made favourable decisions in similar cases?”, according to the judgment.

Universities across Australia have already expressed concerns about the bot being used to cheat within schools, with a recent panel discussion at UNSW emphasising the importance of teaching students to use AI tools “ethically, morally and legally”. But with the bot now being used in court, lawyers’ skills need to evolve with AI technology — something PwC NewLaw director Peter Dombkins said would take time.

“The capabilities of AI-enabled technologies are evolving much faster than the institutional and regulatory frameworks within which the justice sector operates — ranging from education to the daily interactions and activities of lawyers and their clients, and even to the operation of the courts.

“Ultimately, what ChatGPT has recently done through its accessible interface, is made AI more accessible and tangible to a much broader segment of the legal profession (e.g. beyond eDiscovery and due diligence teams who are already familiar with AI-enabled document review), and in doing so has opened up a variety of discussions in regards to if, how and should AI be incorporated into legal work.

“If nothing else, ChatGPT has helped unlock the legal profession’s curiosity and excitement about AI and technology. ChatGPT has prompted a conversation that needed to happen, and a timely conversation in light of the more significant advances already promised by GPT4.”

As reported by VICE, Garcia J stated in his judgment that “the arguments for this decision will be determined in line with the use of artificial intelligence (AI)”.

“The purpose of including these AI-produced texts is in no way to replace the judge’s decision. What we are really looking for is to optimise the time spent drafting judgments after corroborating the information provided by AI,” Garcia J stated.

In conversation with Lawyers Weekly, Australian Family Lawyers principal lawyer Justin Dowd said that AI technologies can be used effectively “in aid of the work of the courts” but must be used with caution.

“It is an essential principle of the rule of law that persons involved in court proceedings are aware of all matters being considered (the evidence) and have the opportunity to answer those matters if required. It is a breach of that rule for a judge to take into account any matters not properly brought in open court. As an example, in the recent rape trial in Canberra that received much publicity, one juror was looking at material that was not in evidence. This breach was so serious that the trial was aborted,” he explained.

“In the case of the Colombian judge, there would be no breach of the rule of law if that judge had indicated in open court that he had done certain research, and then invited evidence or submissions to answer that material.”

ChatGPT’s response ultimately matched the judge’s final decision: that “according to the regulations in Colombia, minors diagnosed with autism are exempt from paying fees for their therapies”.

This is reportedly the first time an AI tool of any kind has been used to make a court ruling — and as reported by The Guardian, Garcia J has since defended his use of the bot, maintaining that “by asking questions to the application, we do not stop being judges, thinking beings” and that ChatGPT had been useful to merely “facilitate the drafting of texts” but not to replace judges entirely.

However, those in the Australian legal profession have voiced concerns over the use of ChatGPT, which Keypoint Law consulting principal Philip Argy said was “no different to Wikipedia in being a massive database of information and curated based on undisclosed criteria”.

This is something Mr Dowd echoed. ChatGPT collates information from every corner of the internet to generate responses to prompts — but has been shown to provide varying answers to different questions as well as make simple mistakes.

“The problem with AI is the ‘garbage in, garbage out’ principle; the information you get back is only as good as the input. I think it is positive that the courts, lawyers and litigants have as many avenues as possible to consider their circumstances. It would, however, be negative if over-reliance was placed on untested information.

“Some programs exist and can give guidance on the law, the applicable procedures and similar factual scenarios. This is really advanced Wikipedia, and again, care has to be taken in the use of and reliance on such material,” he said.

“The courts can make directions about the use of AI material, including giving notice of the intention to use it in particular. AI could be very useful and time-efficient if it is concerned with factual information, and AI can be used for that type of information.

“Programs can be developed to do analyses of particular fact situations as well, and to set out the laws on any particular point. Anything that requires interpretation, or the use of judicial discretion should, in my view, be approached with great caution.”

Lauren is the commercial content writer within Momentum Media’s professional services suite, including Lawyers Weekly, Accountants Daily and HR Leader, focusing primarily on commercial and client content, features and ebooks. Prior to joining Lawyers Weekly, she worked as a trade journalist for media and travel industry publications. Born in England, Lauren enjoys trying new bars and restaurants, attending music festivals and travelling.
